The Problem with A/B Testing

- February 16, 2015

- By Cyndie Shaffstall

This week we set up an elaborate A/B test on subject lines. I liked “How 1.75 Billion Mobile Users See Your Website,” and my client manager liked “Business Cards are No Longer the First Impression.” We learned long ago not to be a focus group of two, but our testing also proves something else I’ve been saying for years: A/B tests do not stand alone.

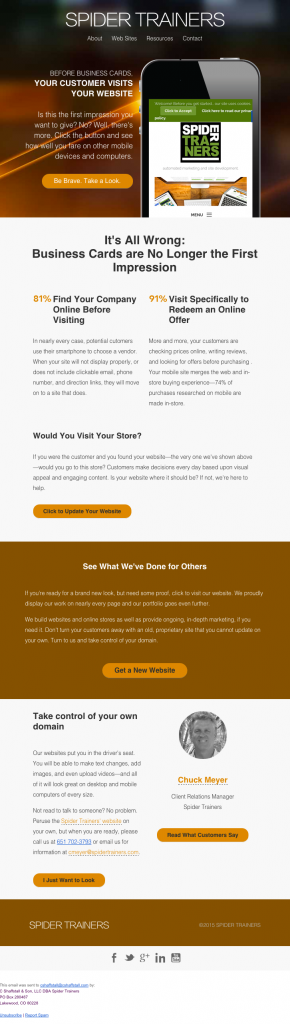

For our Mobile Users campaign, we dropped in an actual screenshot of every recipient’s website as viewed on an iPhone 6 (see image), because we knew this level of personalization could add a sizeable bump to engagement. It’s one thing to tell a recipient their website looks awful on a mobile device; it’s another thing to show them.

At the end of the campaign, we will have sent under 10,000 emails, but before sending the balance, we felt it was important to know which of the two subject lines would perform better. We all want the best possible chance of success, so this was a necessary step: we wanted to be sure our subject line would foster a higher open rate.

For our initial test, we sent 600 emails, half to each subject line. One subject line performed better on opens; the other performed better on clicks to the form. That leaves us with a new question: is it better to get more people to open and see the message, or to get fewer people to open but accurately set their expectations about what was inside so they would click?

The open rate differed by more than 10%, and the CTR by about 2%.
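Whether a gap like that means anything depends partly on sample size. With about 300 recipients per subject line, a difference in open rate or CTR can arise from chance alone. One standard way to check, offered here as an illustration rather than as a step in our original test, is a two-proportion z-test. The sketch below assumes recipients behave independently and that counts are large enough for the normal approximation; the function name `two_proportion_z_test` is hypothetical, and the counts are made up.

```python
import math


def two_proportion_z_test(successes_a: int, n_a: int, successes_b: int, n_b: int) -> tuple[float, float]:
    """Compare two rates (e.g. opens/sent) with a pooled two-proportion z-test.

    Returns (z, two_sided_p_value). Assumes independent recipients and counts
    large enough for the normal approximation to be reasonable.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one recipient")
    rate_a = successes_a / n_a
    rate_b = successes_b / n_b
    # Under the null hypothesis both variants share one rate; estimate it from pooled counts.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("pooled rate is 0 or 1; the test is undefined")
    z = (rate_a - rate_b) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts for illustration only (not the campaign's real numbers):
# 60 of 300 opened subject line A, 45 of 300 opened subject line B.
z, p = two_proportion_z_test(60, 300, 45, 300)
print(f"z = {z:.2f}, p = {p:.3f}")
```

For these made-up counts, a five-point gap in open rate gives a p-value of about 0.11, short of the conventional 0.05 threshold. That is one reason a single 600-email test may not settle the question on its own.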

Should I stop my analysis here and answer the only question I started with (which subject line should we use), or would it be better to look at other factors and try to improve the overall success in any way we can? The problem I see with many marketers’ A/B tests is that they ask one question, answer it, and move on. In fact, many email-automation systems are set up in precisely this manner: send an A/B test of two subject lines, then use whichever performs better to send the balance. What about the open rate and the CTR combined? Isn’t that far more important in this case (and many others)? Let’s take it one step further: what about the open rate, CTR, and form completion rate combined? Now we’re on to something.
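One way to make the “combined” question concrete is to score each variant on the whole funnel instead of on a single stage. If CTR is measured as clicks per open (an assumption; some tools measure clicks per email sent), then open rate × click-to-open rate × form completion rate reduces to completed forms per email sent, so a variant can win overall even while losing on opens. The Python sketch below uses hypothetical names (`VariantResult`, `funnel_rate`, `pick_winner`) and made-up counts.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """Hypothetical per-subject-line tallies from one test send."""
    name: str
    sent: int
    opens: int
    clicks: int       # clicks through to the form
    completions: int  # forms actually submitted


def safe_rate(numerator: int, denominator: int) -> float:
    # Treat an empty stage as a zero rate instead of dividing by zero.
    return numerator / denominator if denominator else 0.0


def funnel_rate(v: VariantResult) -> float:
    """Open rate x click-to-open rate x form completion rate."""
    open_rate = safe_rate(v.opens, v.sent)
    click_to_open = safe_rate(v.clicks, v.opens)
    completion = safe_rate(v.completions, v.clicks)
    # When every stage is non-empty, this product reduces to completions / sent.
    return open_rate * click_to_open * completion


def pick_winner(variants: list[VariantResult]) -> VariantResult:
    # Judge variants by what reaches the end of the funnel, not by opens alone.
    return max(variants, key=funnel_rate)


# Made-up counts: A wins on opens, B wins on clicks and completed forms.
a = VariantResult("A", sent=300, opens=66, clicks=6, completions=2)
b = VariantResult("B", sent=300, opens=54, clicks=9, completions=4)
print(pick_winner([a, b]).name)
```

Counts this small are noisy, so a winner chosen this way is worth confirming with another send before it drives the balance of the campaign.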

There are many factors at work here: time of day, past engagement, lifecycle, and more. The subject line is a good place to start, but I can’t afford to ignore what we’ve gleaned from other campaigns.

This becomes the hardest part of testing, whether A/B or multivariate: isolating what we’ve actually learned. That usually means I cannot analyze just this one campaign; the analysis must be an aggregate.

For our campaign, I took our test results, added them to a spreadsheet of our 2014 campaign results, and started to look for patterns. We’ve all read that Thursday mornings are good (as an example), but does that hold true for my list? Were my open rates affected by time of day, by date, by day of the week, by business type, or by B2C vs. B2B? We track all of these because we’ve found that each of them does, in fact, influence open rate.
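Aggregating results this way amounts to pooling sends and opens across past campaigns and grouping them by the attributes being tracked. A minimal Python sketch, assuming each campaign is stored as a dictionary with hypothetical fields such as `weekday`, `time_of_day`, `audience`, `sent`, and `opens`, might look like this. It divides total opens by total sends within each group rather than averaging per-campaign rates, so a small campaign does not count as much as a large one.

```python
from collections import defaultdict


def open_rate_by(campaigns: list[dict], *fields: str) -> dict[tuple, float]:
    """Pooled open rate for each combination of the given fields.

    Field names (e.g. "weekday", "audience") are hypothetical; use whatever
    attributes your campaign history actually records.
    """
    sent: dict[tuple, int] = defaultdict(int)
    opens: dict[tuple, int] = defaultdict(int)
    for c in campaigns:
        group = tuple(c[f] for f in fields)
        sent[group] += c["sent"]
        opens[group] += c["opens"]
    # Total opens / total sends per group, so larger campaigns carry more weight.
    return {g: opens[g] / sent[g] for g in sent if sent[g] > 0}


# Made-up history for illustration only.
history = [
    {"weekday": "Thu", "time_of_day": "morning", "audience": "B2B", "sent": 200, "opens": 40},
    {"weekday": "Thu", "time_of_day": "morning", "audience": "B2C", "sent": 100, "opens": 10},
    {"weekday": "Tue", "time_of_day": "morning", "audience": "B2B", "sent": 300, "opens": 45},
]

for group, rate in sorted(open_rate_by(history, "weekday", "audience").items()):
    print(group, f"{rate:.1%}")
```

Calling the same function with different fields, such as `"time_of_day"` alone or `"audience"` alone, gives a different cut of the same history without changing the code.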

So, yes, we did learn which of the two subject lines performed better for opens, but what we also learned is that a repeat of the test to another 600 recipients on Tuesday morning instead of Thursday morning resulted in almost exactly opposite performance.

A/B tests can be hard. If they were easy, everyone would do them. Our simple one-time test was not enough information to make decisions about our campaign. It took more testing to either prove or disprove our theories, and it took aggregating the data with other results to paint the full picture.

We did find a winner: an email with a good subject line to get it opened and good presentation of supporting information inside, leading recipients to a form they actually completed, all sent on the right day, at the right time, from the right sender.

While you’re not privy to all of the data we have, based on the subject lines alone, which do you prefer?
